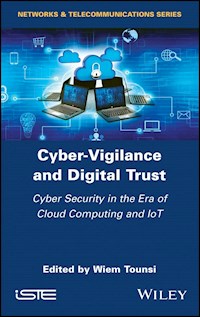

Cyber-Vigilance and Digital Trust ebook

599,99 zł


- Publisher: John Wiley & Sons

- Category: Popular science literature

- Language: English

Cyber threats are ever-increasing. Adversaries are getting more sophisticated, and cyber criminals are infiltrating companies in a variety of sectors. In today's landscape, organizations need to acquire and develop effective security tools and mechanisms, not only to keep up with cyber criminals but also to stay one step ahead. Cyber-Vigilance and Digital Trust develops cyber security disciplines that serve this double objective, dealing with cyber security threats in a unique way. Specifically, the book reviews recent advances in cyber threat intelligence, trust management and risk analysis, and gives a formal and technical approach based on a data tainting mechanism to avoid data leakage in Android systems.


Number of pages: 361

Year of publication: 2019